How to Find B2B Leads on GitHub (Companies Using Specific Repos)

Almost nobody writes about GitHub as a B2B lead source. The good signals sit below the surface: the dependents graph, manifest mining, Sigstore release attestations, and committer-email-domain mapping. Every GitHub-derived lead needs a corroborating non-GitHub signal before it ships.

Almost nobody writes about GitHub as a B2B lead source, which is strange because every devtool seller I know quietly maintains a private list of companies caught using their competitor's SDK in a public package.json. The reason it doesn't surface in the usual prospecting playbooks is partly that the good signals are several layers below the surface (stars are noise, forks are slightly less noise, the real data is in the dependency graph and the release attestations) and partly that pulling them out requires writing code, not running a saved Sales Navigator filter. The SEO competition on this topic is near-zero. The sales-tooling vendors who do mine GitHub treat the technique as proprietary. So this post is the practitioner's note I wish I'd had when I started.

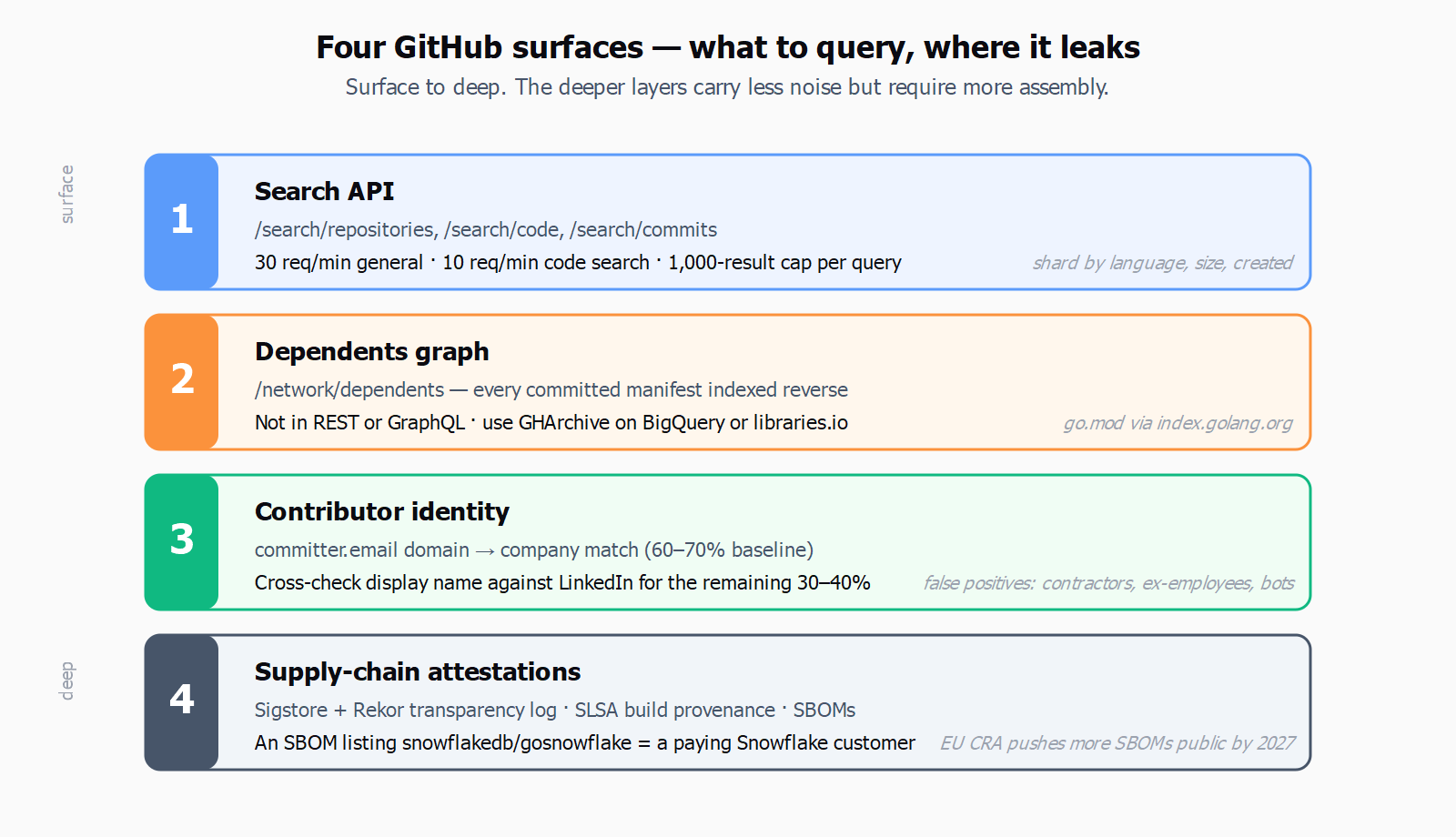

The first useful surface is the GitHub Search API, and the most important thing to internalize is that it is two rate-limited APIs glued together. The general search endpoints - /search/repositories, /search/issues, /search/users, /search/topics, /search/commits - allow up to 30 requests per minute for an authenticated caller. The /search/code endpoint, which is the one you actually want for "find every public repo whose package.json imports @openai/sdk," is throttled separately at around 10 requests per minute. The /rate_limit endpoint exposes both buckets under resources.search and resources.code_search, and you should poll it before any batch run because they decay independently. Every search endpoint also caps the result set at 1,000 items per query (paged 100 at a time), so a query that would return 50,000 hits has to be sharded - usually by language:, size:, or created: ranges - to actually retrieve the long tail. None of this is hidden, but it shapes the entire workflow: code search is precious, repo search is cheap, and you plan around that asymmetry.

The second surface, and the more interesting one for B2B prospecting, is the dependents graph at github.com/<owner>/<repo>/network/dependents. GitHub parses every committed manifest - package.json, go.mod, requirements.txt, Gemfile.lock, Cargo.toml, pom.xml, composer.json - and indexes the reverse edge. The dependents view for a widely-deployed infra repo like kubernetes/kubernetes or apache/kafka typically lists hundreds of thousands of public repositories. The catch (and this is important): this data is not in the REST or GraphQL API. There is a long-standing community thread asking GitHub to expose it; nothing has shipped. The only way to read it programmatically today is to render the HTML page, which is brittle and sits awkwardly against GitHub's terms. The realistic alternatives are the GHArchive event firehose on BigQuery and the libraries.io snapshot, both of which let you reconstruct "which public repos declare a dependency on package X" without scraping. The third option, when the technology you're hunting is published as a Go module, is the public Go module index at index.golang.org, which is a fully cooperative dataset.

Once you have the list of repositories using a given technology, the third surface is contributor identity. GitHub commits expose an author email when the user hasn't toggled email privacy, and the simple rule "if committer.email ends in @stripe.com and the repo is not stripe/*, the committer plausibly works at Stripe" gets you 60-70% of the way to a company-level lead. The remaining 30-40% is fixed by cross-checking the GitHub display name against LinkedIn, which is the same trick the open-source-listening tools (Common Room, Champify, Orbit's successor projects) use under the hood. False positives: contractors using a client email on a personal machine, ex-employees on a stale git config, and bots. None are fatal if you're scoring rather than firing one-shot.

The fourth and newest surface is the supply-chain attestation layer. Sigstore signs releases with short-lived OIDC-bound certificates and writes every signing event to the public Rekor transparency log; the log is queryable and tells you which GitHub Actions workflow on which org/repo signed which artifact at which timestamp. SLSA build-provenance attestations go further and let you reconstruct the toolchain. And because the EU Cyber Resilience Act starts requiring vulnerability disclosure in September 2026 and full SBOM delivery by December 2027, more vendors are publishing SBOMs alongside releases - which is unusually generous for prospecting purposes, because an SBOM is just a machine-readable list of every transitive dependency, including the paid ones. An SBOM that lists cloud.google.com/go/bigquery or github.com/snowflakedb/gosnowflake is a near-certain signal that the publishing organization is a paying GCP or Snowflake customer. None of this requires guesswork; it's all on disk in the release artifact.

Common Room's GitHub listening is one of our secret weapons at Temporal - it gives us tons of insights we wouldn't have otherwise.

- Tim Hughes, VP of Sales, Temporal

Hughes is selling a workflow engine to the exact audience that lives on GitHub, so the testimonial is partly a marketing line and partly an honest read of where the signal is. What's worth noting is the framing - "one of our secret weapons" rather than "our pipeline" - because GitHub-derived leads behave more like an informant than a list. They tell you which companies have engineers actively touching the relevant primitives, which is enormously valuable as one input to an account scoring model and almost useless as a single-source list to dump into a sequencer.

This is exactly the kind of problem that sits at the seam between discovery and enrichment. We think about it a lot at Leadex because the platform's open-web search treats GitHub as one source among many - alongside company sites, LinkedIn, Crunchbase, and news - rather than as the master list. A brief like "Series B data-infra companies whose engineers committed to repos using apache/kafka in the last 90 days, then enrich with funding and HQ" runs across all of those sources in one pass and dedupes the result. Leadex is not a GitHub-specific tool; it's a general-purpose research agent, and that's the right framing - GitHub is rich, but it's most useful when you can corroborate what you find there with everything else the open web knows about the same company.

Which is the counter-take, and the part most posts on this topic skip. Users are not buyers. A package.json with your competitor's SDK in devDependencies is a developer trying something out on a Saturday, not a procurement decision. The buyer for a $40k contract is usually an engineering manager or a VP of Engineering one or two layers above the GitHub identity that committed the import. Stars are worse - the fake-star economy is now thoroughly documented, and a 157,000-star repo with a fork-to-star ratio under 1% is mostly a popularity-contest artifact, not a usage signal. Even forks and dependents have well-known false positives: tutorials, course assignments, abandoned proofs-of-concept, vendored copies. The only way GitHub-derived leads work in a real outbound process is to treat them as one trigger in a stack, the way the 30+ buying-signal triggers post lays out. Pair the import with a recent funding round, a VP-Eng hire, or a tech-stack displacement signal and the conversion math starts to make sense; treat the import alone as a buying intent and your reply rate will tell you the bad news in week two.

The practical version of all this is shorter than the theory. Pick one technology your product replaces or complements. Use code search to find ten to fifty repos that import it (sharded by language and creation date because of the 1,000-result cap). Resolve each repo to a company via the committer-email-domain rule with a LinkedIn cross-check. Score the resulting accounts against a second non-GitHub trigger - hiring, funding, exec change, public stack switch - and only sequence the ones that clear both gates. Keep a separate, larger pool from the dependents page for nurture and for prompt-based prospecting when you have a specific brief to run. The yield from this is small per query and very high per qualified lead, which is the inverse of what most prospecting tools optimize for and the reason almost nobody writes about it.